
Sitemap auditing involves syntax, crawlability, and indexation checks for the URLs and tags in your sitemap files.

A sitemap file contains the URLs to index along with further information about each URL: the last modification date, the priority, the images and videos on the page, other language alternates, and the change frequency.
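To make the tag layout concrete, here is a minimal sketch that reads a tiny sitemap with Python's standard library. The sample XML and the `parse_sitemap` helper are hypothetical and only for illustration; the rest of this guide relies on Advertools for the same job at scale.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sample sitemap used only for illustration
SAMPLE_SITEMAP = """<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>https://example.com/</loc>
    <lastmod>2022-02-01</lastmod>
    <changefreq>daily</changefreq>
    <priority>1.0</priority>
  </url>
  <url>
    <loc>https://example.com/about</loc>
  </url>
</urlset>"""


def parse_sitemap(xml_text):
    """Return one dict per <url> entry; tags that are absent become None."""
    root = ET.fromstring(xml_text.encode("utf-8"))
    rows = []
    for url in root.findall("sm:url", NS):
        rows.append({
            tag: url.findtext(f"sm:{tag}", default=None, namespaces=NS)
            for tag in ("loc", "lastmod", "changefreq", "priority")
        })
    return rows


rows = parse_sitemap(SAMPLE_SITEMAP)
for row in rows:
    print(row)
```

The second entry shows the common case where optional tags are simply missing, which is what the tag usage audit later in this guide measures.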

Sitemap index files can contain hundreds of thousands of URLs, even though a single sitemap can contain at most 50,000 URLs.

Auditing those URLs for better indexation and crawling might take time.

But with the help of Python and SEO automation, it is possible to audit hundreds of thousands of URLs in the sitemaps.

What Do You Need To Perform A Sitemap Audit With Python?

To understand the Python sitemap audit process, you’ll need:

- A fundamental understanding of technical SEO and sitemap XML files.

- Working knowledge of Python and sitemap XML syntax.

- The ability to work with Python libraries: Pandas, Advertools, LXML, Requests, and XPath selectors.

Which URLs Should Be In The Sitemap?

A healthy sitemap XML file should meet the following criteria:

- All URLs should have a 200 status code.

- All URLs should be self-canonical.

- URLs should be open to being indexed and crawled.

- URLs shouldn’t be duplicated.

- URLs shouldn’t be soft 404s.

- The sitemap should have a proper XML syntax.

- The URLs in the sitemap should have a canonical URL that aligns with their Open Graph and Twitter Card URLs.

- The sitemap should have no more than 50,000 URLs and be no larger than 50 MB.

What Are The Benefits Of A Healthy XML Sitemap File?

Smaller sitemaps are better than larger sitemaps for faster indexation. This is particularly important in news SEO, as smaller sitemaps help to increase the overall valid indexed URL count.

Differentiate frequently updated and static content URLs from each other to provide a better crawling distribution among the URLs.

Using the “lastmod” date in an honest way that aligns with the actual publication or update date helps a search engine to trust the date of the latest publication.

While performing the sitemap audit for better indexing, crawling, and search engine communication with Python, the criteria above are followed.

An Important Note…

When it comes to a sitemap’s nature and audit, Google and Microsoft Bing don’t use “changefreq” for the change frequency of the URLs or “priority” to understand the prominence of a URL. In fact, they call it a “bag of noise.”

However, Yandex and Baidu use all of these tags to understand the website’s characteristics.

A 16-Step Sitemap Audit For SEO With Python

A sitemap audit can involve content categorization, site-tree, or topicality and content characteristics.

However, a sitemap audit for better indexing and crawlability mainly involves technical SEO rather than content characteristics.

In this step-by-step sitemap audit process, we’ll use Python to tackle the technical aspects of auditing sitemaps with hundreds of thousands of URLs.

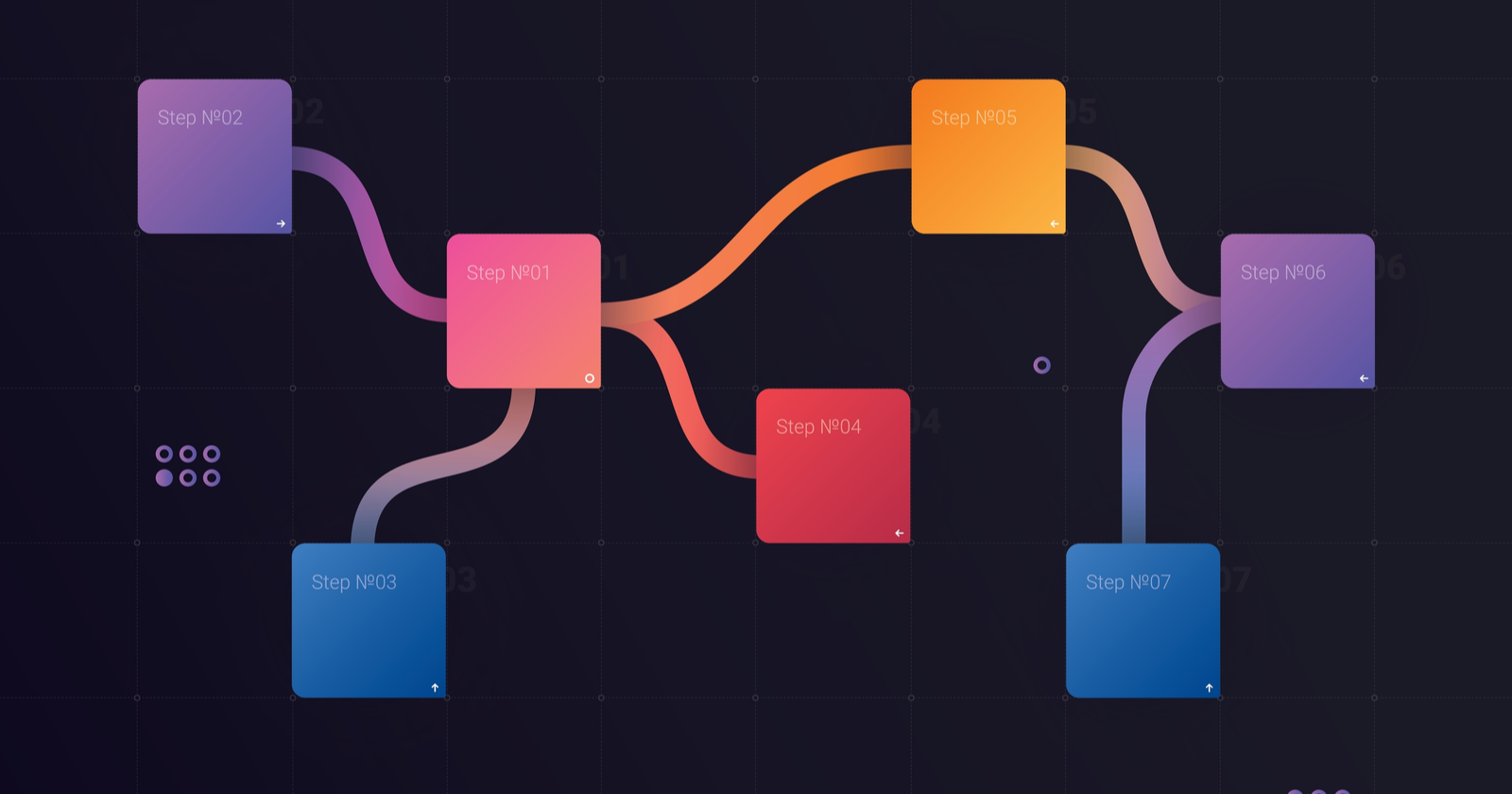

Image created by the author, February 2022

1. Import The Python Libraries For Your Sitemap Audit

The following code block imports the necessary Python libraries for the sitemap XML file audit.

    import advertools as adv
    import pandas as pd
    from lxml import etree
    # IPython is only needed inside Jupyter, to widen the output cells
    from IPython.display import display, HTML
    display(HTML("<style>.container { width:100% !important; }</style>"))

Here’s what you need to know about this code block:

- Advertools is necessary for taking the URLs from the sitemap file and making a request to fetch their content or response status codes.

- “Pandas” is necessary for aggregating and manipulating the data.

- Plotly is necessary for the visualization of the sitemap audit output.

- LXML is necessary for the syntax audit of the sitemap XML file.

- IPython is optional, to expand the output cells of Jupyter Notebook to 100% width.

2. Take All Of The URLs From The Sitemap

Hundreds of thousands of URLs can be taken into a Pandas data frame with Advertools, as shown below.

    sitemap_url = "https://www.complaintsboard.com/sitemap.xml"
    sitemap = adv.sitemap_to_df(sitemap_url)
    # Save to CSV and read it back so the audit can restart without re-downloading
    sitemap.to_csv("sitemap.csv")
    sitemap_df = pd.read_csv("sitemap.csv", index_col=False)
    sitemap_df.drop(columns=["Unnamed: 0"], inplace=True)
    sitemap_df

Above, the Complaintsboard.com sitemap has been taken into a Pandas data frame, and you can see the output below.

A general sitemap URL extraction with sitemap tags with Python is above.

In total, we have 245,691 URLs in the sitemap index file of Complaintsboard.com.

The website uses “changefreq,” “lastmod,” and “priority” inconsistently.

3. Check Tag Usage Within The Sitemap XML File

To understand which tags are used or not within the sitemap XML file, use the function below.

    def check_sitemap_tag_usage(sitemap):
        # True counts the URLs missing the tag, False counts the URLs that have it
        lastmod = sitemap["lastmod"].isna().value_counts()
        priority = sitemap["priority"].isna().value_counts()
        changefreq = sitemap["changefreq"].isna().value_counts()
        lastmod_perc = sitemap["lastmod"].isna().value_counts(normalize=True) * 100
        priority_perc = sitemap["priority"].isna().value_counts(normalize=True) * 100
        changefreq_perc = sitemap["changefreq"].isna().value_counts(normalize=True) * 100
        sitemap_tag_usage_df = pd.DataFrame(data={"lastmod": lastmod,
                                                  "priority": priority,
                                                  "changefreq": changefreq,
                                                  "lastmod_perc": lastmod_perc,
                                                  "priority_perc": priority_perc,
                                                  "changefreq_perc": changefreq_perc})
        # fillna(0) keeps astype(int) from failing when a tag is never (or always) missing
        return sitemap_tag_usage_df.fillna(0).astype(int)

The function check_sitemap_tag_usage is a data frame constructor based on the usage of the sitemap tags.

It takes the “lastmod,” “priority,” and “changefreq” columns, applies the “isna()” and “value_counts()” methods to each, and combines the results via “pd.DataFrame”.

Below, you can see the output.

Sitemap Audit with Python for sitemap tags’ usage.

The data frame above shows that 96,840 of the URLs do not have the lastmod tag, which is equal to 39% of the total URL count of the sitemap file.

The same usage percentage is 19% for the “priority” and the “changefreq” within the sitemap XML file.
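As a rough illustration of where such percentages come from, the sketch below computes the missing-tag counts and shares on a hypothetical four-row data frame. The toy values are invented for the example and are not taken from the audited site.

```python
import pandas as pd

# Hypothetical miniature of the sitemap data frame
toy_sitemap = pd.DataFrame({
    "loc": ["https://example.com/a", "https://example.com/b",
            "https://example.com/c", "https://example.com/d"],
    "lastmod": ["2022-02-01", None, "2022-01-15", None],
    "priority": [0.8, 0.5, None, 0.5],
    "changefreq": ["daily", "weekly", None, "weekly"],
})

tags = ["lastmod", "priority", "changefreq"]

# isna() marks missing tags; sum() counts them and mean() gives their share
missing_summary = pd.DataFrame({
    "missing_count": toy_sitemap[tags].isna().sum(),
    "missing_perc": toy_sitemap[tags].isna().mean() * 100,
})
print(missing_summary)
```

Taking the mean of a boolean column is a compact alternative to `value_counts(normalize=True)` when only the share of missing values is needed.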

There are three main content freshness signals from a website.

These are dates from a web page (visible to the user), structured data (invisible to the user), and “lastmod” in the sitemap.

If these dates are not consistent with each other, search engines can ignore the dates on the website when evaluating its freshness signals.

4. Audit The Site-tree And URL Structure Of The Website

Understanding the most important or crowded URL path is necessary to weigh the website’s SEO efforts or technical SEO audits.

A single improvement for technical SEO can benefit thousands of URLs simultaneously, which creates a cost-effective and budget-friendly SEO strategy.

URL structure understanding mainly focuses on the website’s more prominent sections and content network analysis.

To create a URL tree data frame from a website’s sitemap URLs, use the following code block.

    sitemap_url_df = adv.url_to_df(sitemap_df["loc"])
    sitemap_url_df

With the help of “urllib” or “advertools” as above, you can easily parse the URLs within the sitemap into a data frame.

Creating a URL tree with urllib or Advertools is easy. Checking the URL breakdowns helps to understand the overall information tree of a website.

The data frame above contains the “scheme,” “netloc,” “path,” and every “/” breakdown within the URLs as a “dir” which represents the directory.
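For readers who want to see the breakdown on a single URL, here is a small sketch using only `urllib.parse`. The `url_breakdown` helper and the example URL are hypothetical; the `dir_1`, `dir_2` naming simply mirrors the idea of one column per directory level.

```python
from urllib.parse import urlsplit


def url_breakdown(url):
    """Split a URL into scheme, netloc, path, and one entry per directory level."""
    parts = urlsplit(url)
    # Drop empty segments produced by leading or trailing slashes
    dirs = [segment for segment in parts.path.split("/") if segment]
    row = {
        "url": url,
        "scheme": parts.scheme,
        "netloc": parts.netloc,
        "path": parts.path,
    }
    for position, segment in enumerate(dirs, start=1):
        row[f"dir_{position}"] = segment
    return row


row = url_breakdown("https://example.com/reviews/banks/page-2")
print(row)
```

Counting how often each first-level directory occurs across all sitemap URLs is then enough to spot the most crowded sections of a site.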

Auditing the URL structure of the website is important for two objectives.

These are checking whether all URLs use “HTTPS” and understanding the content network of the website.

Content analysis with sitemap files is not directly the topic of “indexing and crawling”, thus at the end of the article, we will talk about it only briefly.

Check the next section to see the SSL usage on sitemap URLs.

5. Check The HTTPS Usage On The URLs Within Sitemap

Use the following code block to check the HTTPS usage ratio for the URLs within the sitemap.

    sitemap_url_df["scheme"].value_counts().to_frame()

The code block above uses a simple data filtration on the “scheme” column, which contains the URLs’ protocol information.

Using “value_counts”, we see that all URLs are on HTTPS.

Checking the HTTP URLs from the sitemaps can help to find bigger URL property consistency errors.

6. Check The Robots.txt Disallow Commands For Crawlability

The structure of URLs within the sitemap is beneficial to see whether there is a situation of “submitted but disallowed”.

To see whether the website has a robots.txt file, use the code block below.

    import requests
    r = requests.get("https://www.complaintsboard.com/robots.txt")
    r.status_code

    200

Simply, we send a “GET request” to the robots.txt URL.

If the response status code is 200, it means there is a robots.txt file for user-agent-based crawling control.

After checking that the “robots.txt” exists, we can use the “adv.robotstxt_test” method for a bulk robots.txt audit of the crawlability of the URLs in the sitemap.

    sitemap_df_robotstxt_check = adv.robotstxt_test("https://www.complaintsboard.com/robots.txt", urls=sitemap_df["loc"], user_agents=["*"])
    sitemap_df_robotstxt_check["can_fetch"].value_counts()

We have created a new variable called “sitemap_df_robotstxt_check”, and assigned the output of the “robotstxt_test” method.

We have used the URLs in the sitemap with “sitemap_df["loc"]”.

We have performed the audit for all of the user-agents via the “user_agents = ["*"]” parameter and value pair.

You can see the result below.

    True     245690
    False         1
    Name: can_fetch, dtype: int64

It shows that there is one URL that is disallowed but submitted.

We can filter the specific URL as below.

    pd.set_option("display.max_colwidth", 255)
    sitemap_df_robotstxt_check[sitemap_df_robotstxt_check["can_fetch"] == False]

We have used “set_option” to expand all of the values within the “url_path” section.

A URL appears as disallowed but submitted via a sitemap, as in the Google Search Console coverage reports. We see that a “profile” page has been disallowed and submitted.
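The same “disallowed but submitted” idea can be sketched offline with Python's built-in `urllib.robotparser`. The robots.txt rules and URLs below are hypothetical, and the standard-library parser is a stand-in for Advertools here: it may resolve conflicting Allow and Disallow rules differently from a given search engine.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content and sitemap URLs, for illustration only
ROBOTS_TXT = """User-agent: *
Disallow: /profile/
"""

SUBMITTED_URLS = [
    "https://example.com/reviews/acme",
    "https://example.com/profile/john",
]

parser = RobotFileParser()
# parse() accepts the robots.txt lines directly, so no network request is made
parser.parse(ROBOTS_TXT.splitlines())

# URLs that are listed in the sitemap but blocked for every user-agent
disallowed_but_submitted = [
    url for url in SUBMITTED_URLS if not parser.can_fetch("*", url)
]
print(disallowed_but_submitted)
```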

Later, the same control can be done for further examinations such as “disallowed but internally linked”.

But, to do that, we need to crawl at least 3 million URLs from ComplaintsBoard.com, and it can be an entirely new guide.

Some website URLs do not have a proper “directory hierarchy”, which can make the analysis of the URLs, in terms of content network characteristics, harder.

Complaintsboard.com doesn’t use a proper URL structure and taxonomy, so analyzing the website structure is not easy for an SEO or a search engine.

But the most used words within the URLs or the content update frequency can signal which topic the company actually weighs on.

Since we focus on “technical aspects” in this tutorial, you can read the Sitemap Content Audit here.

7. Check The Status Code Of The Sitemap URLs With Python

Every URL in the sitemap has to have a 200 status code.

A crawl has to be performed to check the status codes of the URLs within the sitemap.

But, since it’s costly when you have millions of URLs to audit, we can simply use a new crawling method from Advertools.

Without taking the response body, we can crawl just the response headers of the URLs within the sitemap.

It is useful to decrease the crawl time for auditing possible robots, indexing, and canonical signals from the response headers.

To perform a response header crawl, use the “adv.crawl_headers” method.

    adv.crawl_headers(sitemap_df["loc"], output_file="sitemap_df_header.jl")
    df_headers = pd.read_json("sitemap_df_header.jl", lines=True)
    df_headers["status"].value_counts()

The output of the code block for checking the status codes of the URLs within the sitemap XML files can be seen below.

    200    207866
    404        23
    Name: status, dtype: int64

It shows that 23 URLs from the sitemap are actually 404.

And, they should be removed from the sitemap.

To audit which URLs from the sitemap are 404, use the filtration method below from Pandas.

    df_headers[df_headers["status"] == 404]

The result can be seen below.

Finding the 404 URLs from sitemaps is useful against link rot.

8. Check The Canonicalization From Response Headers

Sometimes, using canonicalization hints on the response headers is beneficial for crawling and indexing signal consolidation.

In this context, the canonical tag in the HTML and the one in the response header have to be the same.

If there are different canonicalization signals on a web page, the search engines can ignore both assignments.

For ComplaintsBoard.com, we don’t have a canonical response header.

- The first step is auditing whether the response header for canonical usage exists.

- The second step is comparing the response header canonical value to the HTML canonical value if it exists.

- The third step is checking whether the canonical values are self-referential.

Check the columns of the output of the header crawl to check the canonicalization from response headers.

    df_headers.columns

Below, you can see the columns.

Python SEO crawl output data frame columns. The “dataframe.columns” method is always useful to check.

If you are not familiar with the response headers, you may not know how to use canonical hints within response headers.

A response header can include the canonical hint with the “Link” value.

It is registered as “resp_headers_link” by Advertools directly.

Another problem is that the extracted strings appear within the “<URL>;” string pattern.

It means we will use regex to extract it.
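To show what that extraction involves, here is a minimal sketch that pulls the canonical URL out of a raw “Link” header value. The pattern and the `canonical_from_link_header` helper are assumptions made for this example, not the pattern used in this guide, and they only cover the simple case where `rel="canonical"` directly follows the URL.

```python
import re

# Matches entries shaped like: <https://example.com/page>; rel="canonical"
LINK_CANONICAL = re.compile(
    r'<([^<>]+)>\s*;\s*rel\s*=\s*"?canonical"?', re.IGNORECASE
)


def canonical_from_link_header(header_value):
    """Return the canonical URL from a Link header value, or None if absent."""
    if not header_value:
        return None
    match = LINK_CANONICAL.search(header_value)
    return match.group(1) if match else None


single = canonical_from_link_header('<https://example.com/a>; rel="canonical"')
mixed = canonical_from_link_header(
    '<https://example.com/feed>; rel="alternate", '
    '<https://example.com/a>; rel="canonical"'
)
print(single, mixed)
```

Anchoring on `rel="canonical"` avoids picking up other link relations, such as alternates, that can share the same header.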

    df_headers["resp_headers_link"]

You can see the result below.

Screenshot from Pandas, February 2022

The regex pattern “[^<>][a-z:/0-9-.]*” is good enough to extract the specific canonical value.

A self-canonicalization check with the response headers is below.

    df_headers["response_header_canonical"] = df_headers["resp_headers_link"].str.extract(r"([^<>][a-z:/0-9-.]*)")
    (df_headers["response_header_canonical"] == df_headers["url"]).value_counts()

We have used two different boolean checks.

One to check whether the response header canonical hint is equal to the URL itself.

Another to see whether the status code is 200.

Since we have 404 URLs within the sitemap, their canonical value will be “NaN”.

It shows there are specific URLs with canonicalization inconsistencies. We have 29 outliers for technical SEO. Every wrong signal given to the search engine for indexation or ranking will cause the dilution of the ranking signals.

To see these URLs, use the code block below.

    df_headers[(df_headers["response_header_canonical"] != df_headers["url"]) & (df_headers["status"] == 200)]

Screenshot from Pandas, February 2022. The canonical values from the response headers can be seen above.

Even a single “/” in the URL can cause a canonicalization conflict, as appears here for the homepage.
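A small sketch can make such conflicts easier to triage by naming the first difference between a URL and its declared canonical. The `canonical_conflict` helper and its labels are hypothetical, chosen only for this illustration.

```python
from urllib.parse import urlsplit


def canonical_conflict(url, canonical):
    """Name the first difference found between a URL and its canonical value."""
    if url == canonical:
        return "match"
    u, c = urlsplit(url), urlsplit(canonical)
    if u.scheme != c.scheme:
        return "scheme differs"
    if u.netloc != c.netloc:
        return "host differs"
    # Paths that are equal once trailing slashes are removed differ only by "/"
    if u.path != c.path and u.path.rstrip("/") == c.path.rstrip("/"):
        return "trailing slash differs"
    return "path, query, or fragment differs"


homepage = canonical_conflict("https://example.com", "https://example.com/")
print(homepage)
```

Grouping the mismatching rows by such a label shows quickly whether the outliers share one root cause, such as a missing trailing slash in the sitemap.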

ComplaintsBoard.com screenshot for checking the response header canonical value and the actual URL of the web page. You can check the canonical conflict here.

If you check log files, you will see that the search engine crawls the URLs from the “Link” response headers.

Thus, in technical SEO, this should be weighted.

9. Check The Indexing And Crawling Commands From Response Headers

There are 14 different X-Robots-Tag specifications for the Google search engine crawler.

The latest one is “indexifembedded” to determine the indexation amount on a web page.

The indexing and crawling directives can be in the form of a response header or the HTML meta tag.

This section focuses on the response header version of indexing and crawling directives.

- The first step is checking whether the X-Robots-Tag property and values exist within the HTTP header or not.

- The second step is auditing whether it aligns itself with the HTML meta tag properties and values if they exist.

Use the command below to check the “X-Robots-Tag” from the response headers.

    def robots_tag_checker(dataframe: pd.DataFrame):
        # Return the first column whose name mentions "robots", if any
        for i in dataframe.columns:
            if "robots" in i:
                return i
        return "There is no robots tag"

    robots_tag_checker(df_headers)

    OUTPUT>>>
    'There is no robots tag'

We have created a custom function to check the “X-Robots-Tag” response headers of the web pages.

It appears that our test subject website doesn’t use the X-Robots-Tag.

If there were an X-Robots-Tag, the code block below should be used.

    # Adjust the column name to the one returned by robots_tag_checker
    df_headers["response_header_x_robots_tag"].value_counts()
    df_headers[df_headers["response_header_x_robots_tag"] == "noindex"]

Check whether there is a “noindex” directive in the response headers, and filter the URLs with this indexation conflict.

In the Google Search Console coverage report, those appear as “Submitted marked as noindex”.

Contradicting indexing and canonicalization hints and signals might make a search engine ignore all of the signals while making the search algorithms trust the user-declared signals less.
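Since an X-Robots-Tag value often carries several comma-separated directives, an exact comparison with “noindex” can miss values such as “noindex, nofollow”. The sketch below splits the value first. Both helpers are hypothetical, and the sketch assumes the header has no user-agent prefix (for example “googlebot: noindex”), which would need extra handling.

```python
def parse_x_robots_tag(header_value):
    """Split an X-Robots-Tag value into a set of lowercase directives."""
    directives = set()
    for part in header_value.split(","):
        part = part.strip().lower()
        if part:
            directives.add(part)
    return directives


def blocks_indexing(header_value):
    """True when the header asks search engines not to index the page."""
    # "none" is shorthand for "noindex, nofollow"
    return bool({"noindex", "none"} & parse_x_robots_tag(header_value))


print(parse_x_robots_tag("NoIndex , NoFollow"))
print(blocks_indexing("noindex, nofollow"))
```

Applied to the header column of the crawl output, `blocks_indexing` would flag every submitted URL that carries an indexing block, regardless of the other directives next to it.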

10. Check The Self Canonicalization Of Sitemap URLs

Every URL in the sitemap XML files should give a self-canonicalization hint.

Sitemaps should only include the canonical versions of the URLs.

The Python code block in this section is to understand whether the sitemap URLs have self-canonicalization values or not.

To check the canonicalization from the HTML documents’ “<head>” section, crawl the websites by taking their response body.

Use the code block below.

    user_agent = "Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/W.X.Y.Z Mobile Safari/537.36 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

The difference between “crawl_headers” and “crawl” is that “crawl” takes the entire response body while “crawl_headers” is only for response headers.

    adv.crawl(sitemap_df["loc"],
              output_file="sitemap_crawl_complaintsboard.jl",
              follow_links=False,
              custom_settings={"LOG_FILE": "sitemap_crawl_complaintsboard.log",
                               "USER_AGENT": user_agent})

You can check the file size differences between the crawl logs, the response header crawl, and the entire response body crawl.

Python crawl output size comparison.

Going from a 6 GB output to a 387 MB output is quite economical.

If a search engine just wants to see certain response headers and the status code, creating information on the headers would make their crawl hits more economical.

How To Deal With Large DataFrames For Reading And Aggregating Data?

This section requires dealing with large data frames.

A computer can’t read a Pandas DataFrame from a CSV or JL file if the file size is larger than the computer’s RAM.

Thus, the “chunking” method is used.

When a website sitemap XML file contains millions of URLs, the total crawl output will be larger than tens of gigabytes.

An iteration across the sitemap crawl output data frame rows is necessary.
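The idea behind chunking can be sketched without Pandas: a JSON lines file holds one record per line, so it can be read record by record while only the current line stays in memory. The in-memory sample and the `iter_json_lines` helper below are hypothetical stand-ins for a real crawl output file.

```python
import io
import json


def iter_json_lines(handle):
    """Yield one parsed record per non-empty line of a JSON lines stream."""
    for line in handle:
        line = line.strip()
        if line:
            yield json.loads(line)


# Hypothetical crawl output; a real audit would use open("some_crawl.jl") instead
SAMPLE_JL = io.StringIO(
    '{"url": "https://example.com/a", "canonical": "https://example.com/a"}\n'
    '{"url": "https://example.com/b?page=2", "canonical": "https://example.com/b"}\n'
    '{"url": "https://example.com/c"}\n'
)

# Keep only the URLs whose declared canonical points somewhere else
non_self_canonical = [
    row["url"]
    for row in iter_json_lines(SAMPLE_JL)
    if row.get("canonical") and row["canonical"] != row["url"]
]
print(non_self_canonical)
```

Pandas applies the same principle in batches of rows, which keeps vectorised operations available on each batch.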

For chunking, use the code block below.

    df_iterator = pd.read_json(
        "sitemap_crawl_complaintsboard.jl",
        chunksize=10000,
        lines=True)
    for i, df_chunk in enumerate(df_iterator):
        output_df = pd.DataFrame(data={"url": df_chunk["url"],
                                       "canonical": df_chunk["canonical"],
                                       "self_canonicalised": df_chunk["url"] == df_chunk["canonical"]})
        # Write the header only with the first chunk, then append
        mode = "w" if i == 0 else "a"
        header = i == 0
        output_df.to_csv(
            "canonical_check.csv",
            index=False,
            header=header,
            mode=mode
        )
    # Read the aggregated result back before filtering
    df = pd.read_csv("canonical_check.csv")
    df[((df["url"] != df["canonical"]) == True) & (df["self_canonicalised"] == False) & (df["canonical"].isna() != True)]

You can see the result below.

Python SEO Canonicalization Audit.

We see that the paginated URLs from the “ebook” subfolder give canonical hints to the first page, which is a non-correct practice according to the Google guidelines.

11. Check The Sitemap Sizes Within Sitemap Index Files

Every sitemap file should be less than 50 MB. Use the Python code block below, in the technical SEO with Python context, to check the sitemap file size.

    # "sitemap_size_mb" is the per-sitemap size column that Advertools adds to the data frame
    pd.pivot_table(sitemap_df, index="sitemap", values="sitemap_size_mb", aggfunc="max")

You can see the result below.

Python SEO Sitemap Size Audit.

We see that all sitemap XML files are under 50 MB.

For better and faster indexation, keeping the sitemap URLs valuable and unique while decreasing the size of the sitemap files is beneficial.

12. Check The URL Count Per Sitemap With Python

Every sitemap should have no more than 50,000 URLs.

Use the Python code block below to check the URL counts within the sitemap XML files.

    (pd.pivot_table(sitemap_df,
                    values=["loc"],
                    index="sitemap",
                    aggfunc="count")
     .sort_values(by="loc", ascending=False))

You can see the result below.

Python SEO Sitemap URL Count Audit.

All sitemaps have fewer than 50,000 URLs. Some sitemaps have only one URL, which wastes the search engine’s attention.

Keeping sitemap URLs that are frequently updated separate from the static and stale content URLs is beneficial.

URL count and URL content character differences help a search engine to adjust crawl demand effectively for different website sections.
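Both findings from this step, sitemaps over the limit and sitemaps with a single URL, can be flagged automatically. The sketch below does so for hypothetical (sitemap, URL) pairs; `audit_url_counts` is an illustrative helper, and the limit is lowered in the example only to keep the sample data short.

```python
from collections import Counter

# Limit taken from the sitemap protocol
MAX_URLS = 50_000


def audit_url_counts(rows, max_urls=MAX_URLS):
    """Flag sitemaps that exceed the URL limit or contain a single URL."""
    counts = Counter(sitemap for sitemap, _loc in rows)
    return {
        "over_limit": sorted(s for s, n in counts.items() if n > max_urls),
        "single_url": sorted(s for s, n in counts.items() if n == 1),
    }


# Hypothetical (sitemap, loc) pairs
sample_rows = [
    ("sitemap-a.xml", "https://example.com/1"),
    ("sitemap-a.xml", "https://example.com/2"),
    ("sitemap-a.xml", "https://example.com/3"),
    ("sitemap-b.xml", "https://example.com/4"),
]

report = audit_url_counts(sample_rows, max_urls=2)
print(report)
```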

13. Check The Indexing And Crawling Meta Tags From URLs’ Content With Python

Even if a web page is not disallowed from robots.txt, it can still be blocked from indexing or crawling by the HTML meta tags.

Thus, checking the HTML meta tags for better indexation and crawling is necessary.

Using “custom selectors” is necessary to perform the HTML meta tag audit for the sitemap URLs.

    sitemap = adv.sitemap_to_df("https://www.holisticseo.digital/sitemap.xml")
    adv.crawl(url_list=sitemap["loc"][:1000],
              output_file="meta_command_audit.jl",
              follow_links=False,
              xpath_selectors={"meta_command": "//meta[@name='robots']/@content"},
              custom_settings={"CLOSESPIDER_PAGECOUNT": 1000})
    df_meta_check = pd.read_json("meta_command_audit.jl", lines=True)
    df_meta_check["meta_command"].str.contains("nofollow|noindex", regex=True).value_counts()

The “//meta[@name="robots"]/@content” XPath selector extracts all of the robots commands from the URLs in the sitemap.
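To see what that selector picks up on a single page, here is a sketch with Python's built-in `html.parser`, used here as a stand-in for the XPath selector. The `MetaRobotsParser` class and the sample HTML are hypothetical.

```python
from html.parser import HTMLParser


class MetaRobotsParser(HTMLParser):
    """Collect the content attribute of every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.robots = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        if (attributes.get("name") or "").lower() == "robots":
            self.robots.append(attributes.get("content") or "")


def meta_robots(html):
    parser = MetaRobotsParser()
    parser.feed(html)
    return parser.robots


sample_html = (
    '<html><head><meta name="robots" content="noindex, follow">'
    "<title>Example</title></head><body></body></html>"
)
print(meta_robots(sample_html))
```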

We have used only the first 1,000 URLs in the sitemap.

And, I stop crawling after the initial 1,000 responses.

I have used another website to check the crawling meta tags since ComplaintsBoard.com doesn’t have it in the source code.

You can see the result below.

Python SEO Meta Robots Audit.

None of the URLs from the sitemap have “nofollow” or “noindex” within the “Robots” commands.

To check their values, use the code below.

    df_meta_check[df_meta_check["meta_command"].str.contains("nofollow|noindex", regex=True) == False][["url", "meta_command"]]

You can see the result below.

Meta tag audit from the websites.

14. Validate The Sitemap XML File Syntax With Python

Sitemap XML file syntax validation is necessary to validate the integration of the sitemap file with the search engine’s perception.

Even if there are certain syntax errors, a search engine can recognize the sitemap file during the XML normalization.

But, every syntax error can decrease the efficiency to a certain degree.

Use the code block below to validate the sitemap XML file syntax.

    def validate_sitemap_syntax(xml_path: str, xsd_path: str):
        # Load the XSD schema, then validate the sitemap document against it
        xmlschema_doc = etree.parse(xsd_path)
        xmlschema = etree.XMLSchema(xmlschema_doc)
        xml_doc = etree.parse(xml_path)
        result = xmlschema.validate(xml_doc)
        return result

    validate_sitemap_syntax("sej_sitemap.xml", "sitemap.xsd")

For this example, I have used “https://www.searchenginejournal.com/sitemap_index.xml”. The XSD file involves the XML file’s context and tree structure.

It is stated in the first line of the sitemap file.

For further information, you can also check the DTD documentation.
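Before schema validation, it can help to confirm that the file is well-formed XML at all. The sketch below covers only that weaker check with the standard library; it is not a replacement for XSD validation, and `well_formedness_error` is a hypothetical helper.

```python
import xml.etree.ElementTree as ET


def well_formedness_error(xml_text):
    """Return the parser's error message, or None when the XML is well-formed."""
    try:
        ET.fromstring(xml_text)
    except ET.ParseError as error:
        return str(error)
    return None


good = "<urlset><url><loc>https://example.com/</loc></url></urlset>"
# The <loc> tag is never closed, so the document is not well-formed
bad = "<urlset><url><loc>https://example.com/</url></urlset>"

print(well_formedness_error(good))
print(well_formedness_error(bad))
```

A file that fails this check cannot be validated against a schema, so running it first gives a clearer error message for broken sitemaps.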

15. Check The Open Graph URL And Canonical URL Matching

It is not a secret that search engines also use the Open Graph and RSS feed URLs from the source code for further canonicalization and exploration.

The Open Graph URLs should be the same as the canonical URL submission.

From time to time, even in Google Discover, Google chooses to use the image from the Open Graph.

To check the Open Graph URL and canonical URL consistency, use the code block below.

    # df_iterator is exhausted after the previous loop, so re-create it
    df_iterator = pd.read_json("sitemap_crawl_complaintsboard.jl", chunksize=10000, lines=True)
    for i, df_chunk in enumerate(df_iterator):
        if "og:url" in df_chunk.columns:
            output_df = pd.DataFrame(data={
                "canonical": df_chunk["canonical"],
                "og:url": df_chunk["og:url"],
                "open_graph_canonical_consistency": df_chunk["canonical"] == df_chunk["og:url"]})
            mode = "w" if i == 0 else "a"
            header = i == 0
            output_df.to_csv(
                "open_graph_canonical_consistency.csv",
                index=False,
                header=header,
                mode=mode
            )
        else:
            print("There is no Open Graph URL property")

    There is no Open Graph URL property

If there is an Open Graph URL property on the website, it will give a CSV file to check whether the canonical URL and the Open Graph URL are the same or not.

But for this website, we don’t have an Open Graph URL.

Thus, I have used another website for the audit.

    if "og:url" in df_meta_check.columns:
        output_df = pd.DataFrame(data={
            "canonical": df_meta_check["canonical"],
            "og:url": df_meta_check["og:url"],
            "open_graph_canonical_consistency": df_meta_check["canonical"] == df_meta_check["og:url"]})
        # A single data frame is written once, so no chunk-based mode or header is needed
        output_df.to_csv(
            "df_og_url_canonical_audit.csv",
            index=False,
            mode="w"
        )
    else:
        print("There is no Open Graph URL property")
    df = pd.read_csv("df_og_url_canonical_audit.csv")
    df

You can see the result below.

Python SEO Open Graph URL Audit.

We see that all canonical URLs and the Open Graph URLs are the same.

16. Check The Duplicate URLs Within Sitemap Submissions

A sitemap index file shouldn’t have duplicated URLs across different sitemap files or within the same sitemap XML file.

The duplication of the URLs within the sitemap files can make a search engine download the sitemap files less often, since a certain percentage of the sitemap file is bloated with unnecessary submissions.

In certain situations, it can appear as a spamming attempt to manipulate the crawling schemes of the search engine crawlers.
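As a minimal sketch of both cases, duplicates across sitemap files and duplicates within one file, the snippet below groups hypothetical (sitemap, URL) pairs by URL. `find_duplicates` is an illustrative helper, not part of any library.

```python
from collections import defaultdict


def find_duplicates(rows):
    """Map each URL submitted more than once to the sitemaps that list it."""
    seen = defaultdict(list)
    for sitemap, loc in rows:
        seen[loc].append(sitemap)
    return {loc: sitemaps for loc, sitemaps in seen.items() if len(sitemaps) > 1}


# Hypothetical (sitemap, loc) pairs
sample_rows = [
    ("sitemap-a.xml", "https://example.com/1"),
    ("sitemap-a.xml", "https://example.com/2"),
    ("sitemap-b.xml", "https://example.com/1"),  # duplicated across files
    ("sitemap-b.xml", "https://example.com/3"),
    ("sitemap-b.xml", "https://example.com/3"),  # duplicated within one file
]

duplicates = find_duplicates(sample_rows)
print(duplicates)
```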

Use the code block below to check the duplicate URLs within the sitemap submissions.

    sitemap_df["loc"].duplicated().value_counts()

You can see that 49,574 URLs from the sitemap are duplicated.

Python SEO duplicated URL audit from the sitemap XML files.

To see which sitemaps have more duplicated URLs, use the code block below.

    pd.pivot_table(sitemap_df[sitemap_df["loc"].duplicated()==True], index="sitemap", values="loc", aggfunc="count").sort_values(by="loc", ascending=False)

You can see the result.

Python SEO sitemap audit for duplicated URLs.

Chunking the sitemaps can help with site-tree and technical SEO analysis.

To see the duplicated URLs within the sitemap, use the code block below.

    sitemap_df[sitemap_df["loc"].duplicated() == True]

You can see the result below.

Duplicated sitemap URL audit output.

Conclusion

I wanted to show how to validate a sitemap file for better and healthier indexation and crawling for technical SEO.

Python is vastly used for data science, machine learning, and natural language processing.

But, you can also use it for technical SEO audits to support the other SEO verticals with a holistic SEO approach.

In a future article, we can expand these technical SEO audits further with different details and methods.

But, in general, this is one of the most comprehensive technical SEO guides for sitemaps and a sitemap audit tutorial with Python.


Featured image: elenasavchina2/Shutterstock
